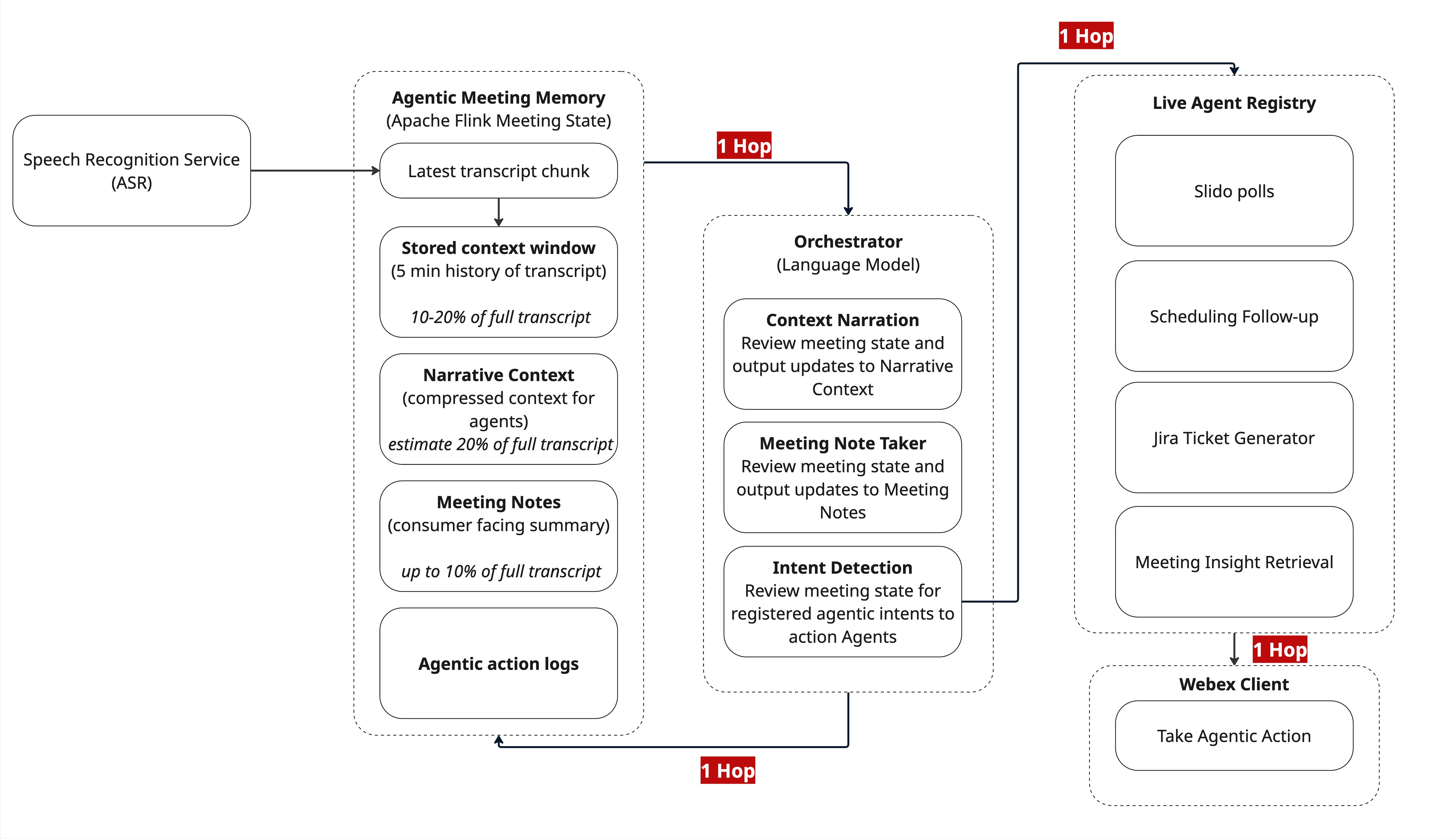

🏗️ Live Agentic Framework Architecture

Stateful Orchestration for Real-Time Meeting Intelligence

🔄 End-to-End Architecture Comparison

Two competing approaches to live meeting intelligence: the Stateless Observer (current) versus the Stateful Orchestrator (proposed).

The "Live Agentic Framework" (Stateful) — The "Moat"

📐 Architecture Flow

ASR → Flink (Meeting Memory) → Orchestrator (LLM) → Agent Registry → Webex Client

The Orchestrator is the single consumer; adding a new agent just requires registering it in the registry. The data flow doesn't change.
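The registration idea can be illustrated with a minimal sketch. It assumes a registry keyed by intent name and agents that are plain callables; `AgentRegistry`, `poll_agent`, `jira_agent`, and the intent names are hypothetical and not taken from the PoC code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical handler signature: (meeting_id, intent payload) -> result text.
AgentHandler = Callable[[str, dict], str]


@dataclass
class AgentRegistry:
    """Maps an intent name to the agents that should handle it."""
    handlers: Dict[str, List[AgentHandler]] = field(default_factory=dict)

    def register(self, intent: str, handler: AgentHandler) -> None:
        self.handlers.setdefault(intent, []).append(handler)

    def dispatch(self, meeting_id: str, intent: str, payload: dict) -> List[str]:
        # Intents with no registered agent are ignored rather than raising.
        return [h(meeting_id, payload) for h in self.handlers.get(intent, [])]


def poll_agent(meeting_id: str, payload: dict) -> str:
    return f"poll suggested in {meeting_id}: {payload['question']}"


def jira_agent(meeting_id: str, payload: dict) -> str:
    return f"ticket drafted in {meeting_id}: {payload['title']}"


registry = AgentRegistry()
registry.register("poll_suggestion", poll_agent)
# Adding a new agent is one more registration; the upstream flow is untouched.
registry.register("action_item", jira_agent)

results = registry.dispatch("m-1", "poll_suggestion", {"question": "Ship on Friday?"})
```

In this sketch the orchestrator would call `dispatch` only after it has already decided which intent fired, so agents never read the transcript themselves.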

🔑 Key Architecture Properties

- 🧠 "Compute-to-Data" Model: The Orchestrator (LLM) sits directly next to the Meeting Memory (Flink). It reasons over the entire context in-memory without making network calls to a database.

- 📍 Single Source of Truth: The "Agentic Meeting Memory" handles ASR corrections natively. If the ASR changes "Site-level" to "Org-level," the memory updates instantly before the Orchestrator sees it.

- 📈 O(1) Complexity: Database and ASR-stream load stays constant as agents are added, so adding 50 new agents adds zero additional load to either. The Orchestrator detects intents once per transcript chunk and dispatches to an agent only when necessary.

- ⚡ Real-Time Latency: < 2 seconds end-to-end, designed for real-time intervention during live meetings.

The Agentic Meeting Memory + Orchestrator operate as a unified in-memory reasoning engine.
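One way to picture how corrections reach the reasoning step is the sketch below. It assumes each ASR segment carries a stable identifier so that a correction can overwrite the earlier text in place; `MeetingMemory`, `upsert`, and `chunk_id` are hypothetical names, and the PoC itself keeps this state in Flink rather than in a Python object.

```python
from typing import Dict


class MeetingMemory:
    """In-memory transcript store; a correction overwrites the chunk it revises."""

    def __init__(self) -> None:
        # meeting_id -> {chunk_id: text}. Python dicts preserve insertion order,
        # and re-assigning an existing key keeps its original position.
        self._chunks: Dict[str, Dict[str, str]] = {}

    def upsert(self, meeting_id: str, chunk_id: str, text: str) -> None:
        self._chunks.setdefault(meeting_id, {})[chunk_id] = text

    def context(self, meeting_id: str) -> str:
        # The orchestrator only ever reads the already-corrected view.
        return " ".join(self._chunks.get(meeting_id, {}).values())


memory = MeetingMemory()
memory.upsert("m-1", "c1", "Apply the policy at Site-level.")
memory.upsert("m-1", "c2", "Then notify the admins.")
# ASR correction for the first segment arrives later.
memory.upsert("m-1", "c1", "Apply the policy at Org-level.")

corrected = memory.context("m-1")
```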

Live Agent Registry allows adding new agents with zero infrastructure changes.

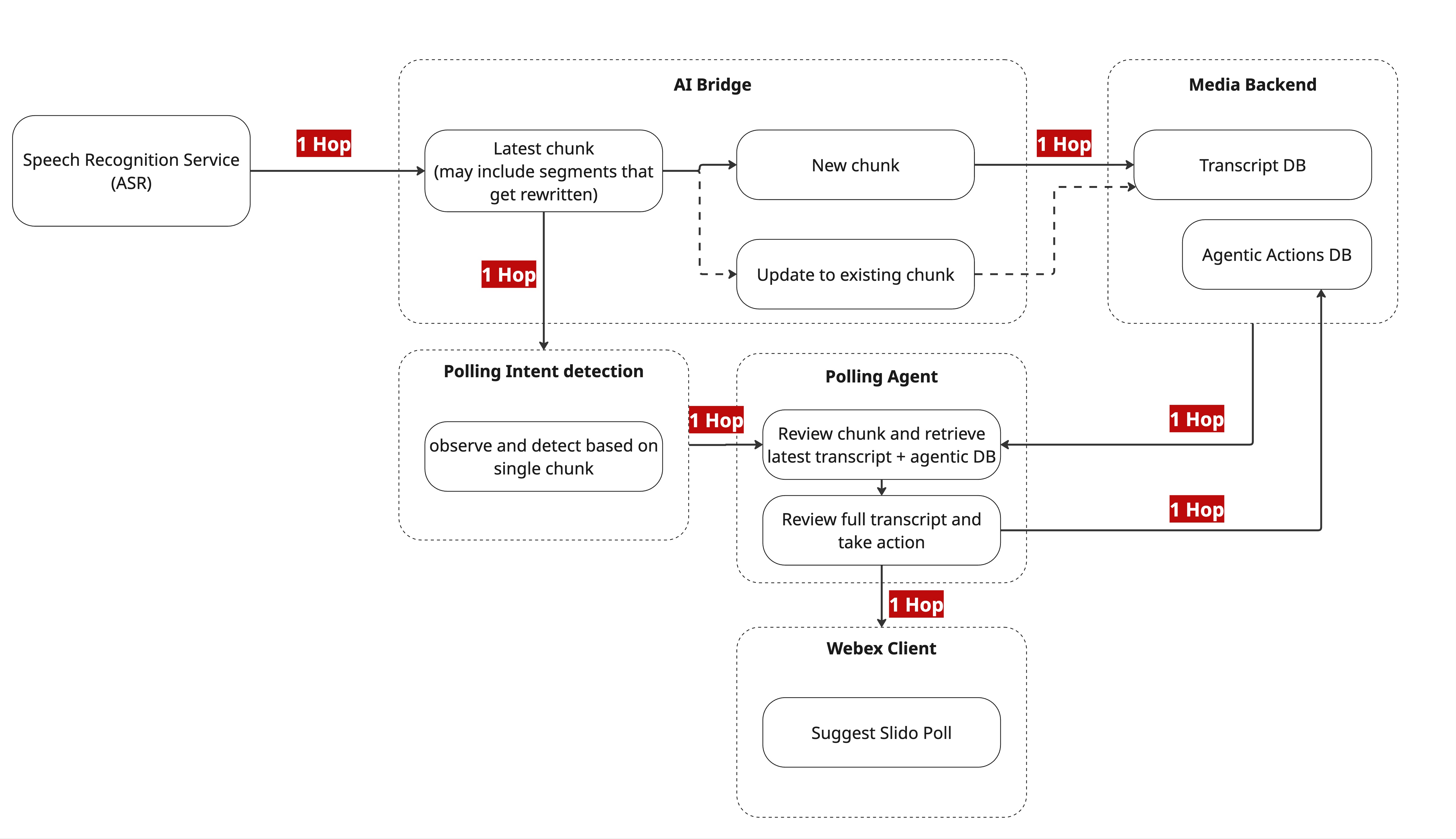

The "Observer" Architecture (Stateless) — The "Trap"

📐 Architecture Flow

ASR → AI Bridge → DB → Intent Detection → Polling Agent → DB (Read) → DB (Write) → Client

Every new agent requires its own detection logic, its own database read to get context, and its own database write.

⚠️ Critical Problems

- 🐘 "Thundering Herd" Problem: Every agent (Polling, Jira, Scheduling) must independently query the "Transcript DB" for context. If you have 10 agents, you have 10x the database load for every sentence spoken.

- 🏃 Race Conditions: The "Polling Agent" reads from the DB while the "AI Bridge" is writing to it. If an ASR correction happens (e.g., "Wait, don't launch the poll"), the Agent might read the old, wrong text and launch it anyway.

- 🐌 High Latency: The multi-hop chain (Bridge → API → Agent → DB) introduces 10-15 seconds of lag, making "real-time" intervention impossible.

- 🔒 Siloed Logic: The "Polling Intent detection" is hardcoded. Adding a "Jira Agent" requires building a whole new detection pipe, duplicating effort.

Multiple round-trips to Media Backend/DB on every agent decision.

Polling Agent cannot see ASR corrections — may act on stale/wrong data.
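The database-load argument can be made concrete with a small counting sketch. It assumes the worst case described above, where every agent reads context once per sentence; `CountingDB`, `run_stateless`, and `run_stateful` are illustrative names, not measurements from a real system.

```python
from typing import List


class CountingDB:
    """Toy transcript store that counts how often context is read."""

    def __init__(self) -> None:
        self.rows: List[str] = []
        self.reads = 0

    def write(self, text: str) -> None:
        self.rows.append(text)

    def read_context(self) -> List[str]:
        self.reads += 1
        return list(self.rows)


def run_stateless(sentences: List[str], n_agents: int) -> int:
    """Each agent queries the DB for context on every sentence."""
    db = CountingDB()
    for sentence in sentences:
        db.write(sentence)
        for _ in range(n_agents):
            db.read_context()
    return db.reads


def run_stateful(sentences: List[str], n_agents: int) -> int:
    """Context lives in process memory, so agents trigger no DB reads."""
    memory: List[str] = []
    db_reads = 0
    for sentence in sentences:
        memory.append(sentence)  # the orchestrator inspects memory directly
    return db_reads


sentences = ["first", "second", "third"]
stateless_reads = run_stateless(sentences, n_agents=10)
stateful_reads = run_stateful(sentences, n_agents=10)
```

With three sentences and ten agents, the stateless path performs 30 context reads, while the stateful path performs none regardless of the agent count.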

📊 Architecture Comparison

🔬 This PoC: Live Implementation

This demo implements the stateful architecture end-to-end:

    ┌──────────────┐     ┌───────────────┐     ┌─────────────────────────────────────┐
    │   Browser    │────▶│ Google Cloud  │────▶│            Apache Flink             │
    │  Transcript  │     │    Pub/Sub    │     │  ┌───────────────────────────────┐  │
    │  Generator   │     │ (Message Bus) │     │  │     StatefulNoteProcessor     │  │
    └──────────────┘     └───────────────┘     │  │  • keyed by meeting_id        │  │
                                               │  │  • stores last N chunks       │  │
                                               │  │  • maintains topic/intent     │  │
                                               │  │  • manages MoM state          │  │
                                               │  └───────────────┬───────────────┘  │
                                               │                  │                  │
                                               │          ┌───────▼───────┐          │
                                               │          │   LLM Proxy   │          │
                                               │          │  (GPT-4/etc)  │          │
                                               │          └───────┬───────┘          │
                                               └──────────────────┼──────────────────┘
                                                                  │
                                                          ┌───────▼───────┐
                                                          │  Centrifugo   │
                                                          │  (WebSocket)  │
                                                          └───────┬───────┘
                                                                  │
                                                          ┌───────▼───────┐
                                                          │    Browser    │
                                                          │   (This UI)   │
                                                          └───────────────┘

📝 Transcript Generator

Browser + Node.js

- Simulates live ASR stream

- Uploads VTT/Webex transcripts

- Configurable playback speed

📬 Pub/Sub

Google Cloud Pub/Sub

- Fully managed message bus

- At-least-once delivery

- Decouples producers from consumers
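At-least-once delivery means the same transcript chunk can arrive more than once, so the consumer needs to be idempotent. The sketch below shows one common approach, deduplicating on the message ID; `DedupingConsumer` is a hypothetical helper, and the unbounded `set` is a simplification that a long-running job would need to bound or expire.

```python
from typing import List, Set


class DedupingConsumer:
    """Processes each message ID once, even if the bus redelivers it."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()
        self.processed: List[str] = []

    def handle(self, message_id: str, data: str) -> bool:
        """Return True if the message was processed, False if it was a duplicate."""
        if message_id in self._seen:
            return False
        self._seen.add(message_id)
        self.processed.append(data)
        return True


consumer = DedupingConsumer()
first = consumer.handle("msg-1", "Let's vote on the release date.")
redelivered = consumer.handle("msg-1", "Let's vote on the release date.")
second = consumer.handle("msg-2", "I'll take the action item.")
```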

⚡ Flink Orchestrator

Apache Flink (Java)

- Keyed state per meeting_id

- In-memory context window

- Manages MoM, intents, topics

- Calls LLM for analysis
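The keyed-state behaviour listed above can be approximated in plain Python as follows. This is only a sketch of the idea, not the Flink job: `KeyedProcessor`, `MeetingState`, and `WINDOW_SIZE` are assumed names, and the real PoC holds this state in Flink keyed state rather than in a dictionary.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List

WINDOW_SIZE = 3  # hypothetical value for "last N chunks"


@dataclass
class MeetingState:
    """Per-meeting state: a bounded context window plus running outputs."""
    window: Deque[str] = field(default_factory=lambda: deque(maxlen=WINDOW_SIZE))
    summary: str = ""
    topics: List[str] = field(default_factory=list)
    intents: List[str] = field(default_factory=list)


class KeyedProcessor:
    """Routes each chunk to the state belonging to its meeting_id."""

    def __init__(self) -> None:
        self._state: Dict[str, MeetingState] = defaultdict(MeetingState)

    def process(self, meeting_id: str, chunk: str) -> MeetingState:
        state = self._state[meeting_id]
        state.window.append(chunk)  # the oldest chunk drops out once full
        return state


processor = KeyedProcessor()
for text in ["one", "two", "three", "four"]:
    state_a = processor.process("meeting-a", text)
state_b = processor.process("meeting-b", "hello")
```

After four chunks, `meeting-a` retains only the last three, and `meeting-b` has its own independent window.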

🤖 LLM Proxy

Python Flask + GPT-4

- Configurable model selection

- Prompt management

- Token tracking & cost calc
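Token tracking and cost calculation might look like the sketch below. The model names and per-1,000-token prices are placeholders invented for illustration, not real vendor pricing, and `UsageTracker` is a hypothetical helper rather than the proxy's actual code.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Placeholder prices in USD per 1,000 tokens: (prompt, completion).
# These are NOT real vendor prices.
PRICES_PER_1K: Dict[str, Tuple[float, float]] = {
    "example-large": (0.03, 0.06),
    "example-small": (0.001, 0.002),
}


@dataclass
class UsageTracker:
    """Accumulates token counts and estimated cost across requests."""
    prompt_tokens: int = 0
    completion_tokens: int = 0
    cost_usd: float = 0.0

    def record(self, model: str, prompt_tokens: int, completion_tokens: int) -> float:
        """Add one request to the totals and return its estimated cost."""
        prompt_price, completion_price = PRICES_PER_1K[model]
        cost = (prompt_tokens / 1000) * prompt_price
        cost += (completion_tokens / 1000) * completion_price
        self.prompt_tokens += prompt_tokens
        self.completion_tokens += completion_tokens
        self.cost_usd += cost
        return cost


tracker = UsageTracker()
request_cost = tracker.record("example-large", prompt_tokens=1000, completion_tokens=500)
```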

📡 Centrifugo

WebSocket Server

- Per-meeting channels

- Real-time push to clients

- Sub-100ms delivery

⏱️ Observed Latency (This PoC)

💡 LLM inference dominates the end-to-end latency. With a faster model, such as a small language model (SLM), the total could drop below 500 ms.

🎯 Intent Detection Capabilities

The orchestrator detects intents in real-time via a configurable Intent Registry:

Poll Suggestion

Detects debates/votes and suggests creating a poll

Scheduling

Identifies follow-up meeting requests

Knowledge Fetch

Detects questions about past decisions

Action Items

Captures commitments with owners/deadlines

Decisions

Logs key decisions made during meeting

Open Questions

Tracks unresolved questions for follow-up
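A configurable Intent Registry could be represented as plain data, as in the sketch below. The descriptions come from the list above; the snake_case keys, the `enabled` flag, and the JSON shape returned by the LLM are assumptions made for illustration.

```python
import json
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class IntentSpec:
    name: str
    description: str
    enabled: bool = True  # hypothetical toggle for configurability


INTENT_REGISTRY: Dict[str, IntentSpec] = {
    spec.name: spec
    for spec in [
        IntentSpec("poll_suggestion", "Detects debates/votes and suggests creating a poll"),
        IntentSpec("scheduling", "Identifies follow-up meeting requests"),
        IntentSpec("knowledge_fetch", "Detects questions about past decisions"),
        IntentSpec("action_items", "Captures commitments with owners/deadlines"),
        IntentSpec("decisions", "Logs key decisions made during meeting"),
        IntentSpec("open_questions", "Tracks unresolved questions for follow-up"),
    ]
}


def parse_detected_intents(llm_json: str) -> List[str]:
    """Keep only intents that are registered and enabled.

    Assumes the LLM replies with {"intents": [{"type": "..."}, ...]}.
    """
    payload = json.loads(llm_json)
    detected = []
    for item in payload.get("intents", []):
        spec = INTENT_REGISTRY.get(item.get("type", ""))
        if spec is not None and spec.enabled:
            detected.append(spec.name)
    return detected


sample = '{"intents": [{"type": "scheduling"}, {"type": "made_up_intent"}]}'
detected = parse_detected_intents(sample)
```

Filtering against the registry means an unexpected intent name in the LLM output is dropped instead of being dispatched.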

📚 Reference Documents

🛠️ Tech Stack

✏️ Prompt Editor & Tester

Edit prompts and test them with sample data to see LLM response

🤖 Global LLM Model

🌐 Applies to ALL requests — This model is used for live meeting processing and all API calls. Changes are saved to the server.

⚡ Prompt Architecture

Uses a single prompt that covers all 5 steps. Best for prompt refinement.

📝 System Prompt

📝 User Prompt Template

{previous_summary}, {previous_topics}, {previousIntents},

{context_window}, {current_chunk}
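Filling these placeholders can be sketched with Python's standard string formatting. The placeholder names are the ones listed above; the surrounding template wording and the `render_user_prompt` helper are hypothetical, since the actual prompt text is not shown here.

```python
from string import Formatter
from typing import Dict, List

# Hypothetical template text; only the placeholder names come from the page.
USER_PROMPT_TEMPLATE = (
    "Previous summary: {previous_summary}\n"
    "Previous topics: {previous_topics}\n"
    "Previous intents: {previousIntents}\n"
    "Context window: {context_window}\n"
    "Current chunk: {current_chunk}"
)


def placeholders(template: str) -> List[str]:
    """Return the placeholder names found in a format-style template."""
    return [name for _, name, _, _ in Formatter().parse(template) if name]


def render_user_prompt(template: str, values: Dict[str, str]) -> str:
    """Fill the template, failing loudly if any placeholder has no value."""
    missing = [name for name in placeholders(template) if name not in values]
    if missing:
        raise ValueError(f"missing placeholder values: {missing}")
    return template.format(**values)


prompt = render_user_prompt(
    USER_PROMPT_TEMPLATE,
    {
        "previous_summary": "Team reviewed the release plan.",
        "previous_topics": "release, QA",
        "previousIntents": "none",
        "context_window": "Alice: Are we shipping Friday?",
        "current_chunk": "Bob: Let's put it to a vote.",
    },
)
```

Checking for missing values before formatting gives a clearer error than the `KeyError` that `str.format` would raise on its own.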

🧪 Test Sample Data

Edit sample data matching prompt placeholders, then click "Test Prompt"
